The Formative Assessment Systems for ATE project (FAS4ATE) focuses on assessment practices that serve the ongoing evaluation needs of projects and centers. Determining these information needs and organizing data collection activities is a complex and demanding task, and we’ve used logic models as a way to map them out. Over the next five weeks, we offer a series of blog posts that provide examples and suggestions of how you can make formative assessment part of your ATE efforts. – Arlen Gullickson, PI, FAS4ATE

Week 2 – Why who you invite to your professional development makes a difference for your results.

What would happen if you hosted an event and were careless about the invitation list? You’d probably get plenty of people to come, but they might not be the people you need to make the event a success. Attendance numbers alone don’t indicate success.

At the National Convergence Technology Center, we offer professional development events called Working Connections. The purpose of these week-long institutes is to prepare community college faculty to teach new IT topics in upcoming semesters.

Who would be the “wrong” people to invite to Working Connections? Anyone BUT community college IT faculty!

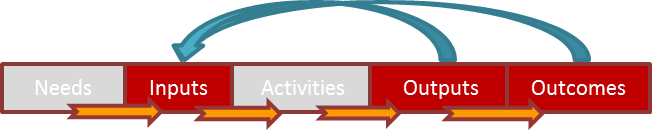

When you are working on the input section of your logic model, sometimes you need to look at the outputs and outcomes sections first (i.e., what kind of output and outcome do you want, and what inputs are needed to achieve them?). In our case, we wanted to show impact from professional development of IT faculty. For example, did the faculty actually teach the courses they learned about at Working Connections? How did this training impact the way they teach? How did Working Connections sessions impact the students these professors taught? Did these new skills impact student learning?

We gather data from attendees at the completion of each Working Connections event (overall and topic track surveys), and then we follow up with longitudinal questions at 6 months, 18 months, 30 months, 42 months, and 54 months after the event.
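For readers who track follow-up surveys in a script or spreadsheet, the schedule above can be sketched as a small date calculation. This is only an illustration: the function names and the example event date are hypothetical, and the only detail taken from our process is the list of month offsets.

```python
import calendar
from datetime import date

# Follow-up offsets in months, taken from the survey schedule described above.
FOLLOW_UP_MONTHS = [6, 18, 30, 42, 54]


def add_months(start: date, months: int) -> date:
    """Return the date `months` after `start`.

    If the target month is shorter than the start day (e.g. Aug 31 + 6 months),
    the day is clamped to the last day of the target month.
    """
    total = start.month - 1 + months
    year = start.year + total // 12
    month = total % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def follow_up_schedule(event_end: date) -> list[date]:
    """List the dates on which each longitudinal survey would be due."""
    return [add_months(event_end, m) for m in FOLLOW_UP_MONTHS]


if __name__ == "__main__":
    # Hypothetical event end date, used only for illustration.
    for due in follow_up_schedule(date(2014, 7, 18)):
        print(due.isoformat())
```

Keeping the offsets in one list makes it easy to adjust the schedule if a project follows up at different intervals.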

When we first implemented the surveys, we noticed that some of the participants had not planned to teach the track they studied at Working Connections. We wondered why, looked at a variety of possibilities, and soon discovered that some of our registrants were not community college IT faculty.

We instituted a simple step in the registration process to verify each participant’s job. It consisted of two items on the registration form: (1) Please provide your supervisor’s name, title, phone number, and email; and (2) What IT/convergence classes do you currently teach or supervise? (Working Connections is intended solely for IT/convergence faculty or academic administrators.)
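If your registration form feeds an online system, a completeness check on those two items might look something like the sketch below. It is an assumption about how such a check could be automated, not a description of our registration system; the field names and the function are hypothetical, and a person would still need to confirm the answers.

```python
# Hypothetical field names for the supervisor contact details requested in item (1).
SUPERVISOR_FIELDS = (
    "supervisor_name",
    "supervisor_title",
    "supervisor_phone",
    "supervisor_email",
)


def missing_verification_items(registration: dict) -> list[str]:
    """Return the names of verification items left blank on a registration.

    An empty list means both form items were answered; it does not confirm
    that the registrant is actually IT/convergence faculty.
    """
    missing = [
        field
        for field in SUPERVISOR_FIELDS
        if not (registration.get(field) or "").strip()
    ]
    # Item (2): at least one non-blank class the registrant teaches or supervises.
    classes = [c for c in registration.get("classes_taught", []) if c.strip()]
    if not classes:
        missing.append("classes_taught")
    return missing


if __name__ == "__main__":
    # Made-up registration record for illustration only.
    example = {
        "supervisor_name": "Pat Example",
        "supervisor_title": "Dean",
        "supervisor_phone": "555-0100",
        "supervisor_email": "",
        "classes_taught": [],
    }
    print(missing_verification_items(example))
```

A check like this only flags incomplete forms for follow-up.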

Soon our impact data started trending upward. We also highlighted this requirement in BOLD on our event website: http://summerworkingconnections2014.mobilectc.wikispaces.net/home.

In a practical sense, we also wanted to make sure that the money we invested in the event was going toward the right target. Ensuring that we had the “right” people come also ensured we were getting the best bang for the buck.

It seems like such a simple thing, but examining who you are involving in your program events can make a big difference in the success of your project.

Except where noted, all content on this website is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

EvaluATE is supported by the National Science Foundation under grant number 2332143. Any opinions, findings, and conclusions or recommendations expressed on this site are those of the authors and do not necessarily reflect the views of the National Science Foundation.
