Evaluators contribute to developing the Advanced Technological Education (ATE) community’s awareness and understanding of theories, concepts, and practices that can advance technician education at the discrete project level as well as at the ATE program level. Regardless of focus, project teams explore, develop, implement, and test interventions designed to lead to successful outcomes in line with ATE’s goals. At the program level, all ATE community members, including program officers, benefit from the reviewing and compiling of project outcomes to build an evidence base to better prepare the technical workforce.

Evidence-based decision-making is one way to ensure that project outcomes lead to quality and systematic program outcomes. As indicated in Figure 1, good decision-making depends on three domains of evidence within an environment or organizational context: contextual; experiential (i.e., resources, including practitioner expertise); and best available research evidence (Satterfield et al., 2009).

Figure 1. Domains that influence evidence-based decision-making (Satterfield et al., 2009)


As Figure 1 suggests, at the project level, as National Science Foundation (NSF) ATE principal investigators (PIs) work, evaluators can assist them in making project design and implementation decisions based on the best available research evidence, considering participant, environmental, and organizational dimensions. For example, researchers and evaluators work together to compile the best research evidence about specific populations (e.g., underrepresented minorities) in which interventions can thrive. Then, they establish mutually beneficial researcher-practitioner partnerships to make decisions based on their practical expertise and current experiences in the field.

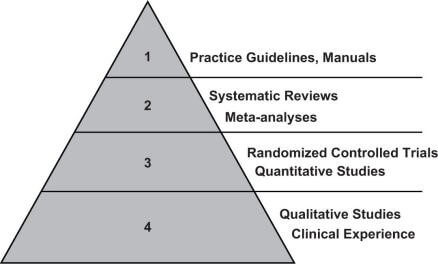

At the NSF ATE program level, program officers often review and qualitatively categorize project outcomes provided by project teams, including their evaluators, as shown in Figure 2.

Figure 2. Quality of Evidence Pyramid (Paynter, 2009)


As Figure 2 suggests, aggregated project outcomes tell a story about what the ATE community has learned and needs to know about advancing technician education. At the highest levels of evidence, program officers strive to obtain strong evidence that can lead to best practice guidelines and manuals grounded by quantitative studies and trials, and enhanced by rich and in-depth qualitative studies and clinical experiences. Evaluators can meet PIs’ and program officers’ evidence needs with project-level formative and summative feedback (such as outcomes and impact evaluations) and program-level data, such as outcome estimates from multiple studies (i.e., meta-analyses of project outcome studies). Through these complementary sources of evidence, evaluators facilitate the sharing of the most promising interventions and best practices.

In this call to action, we charge PIs and evaluators with working closely together to ensure that project outcomes are clearly identified and supported by evidence that benefits the ATE community’s knowledge base. Evaluators’ roles include guiding leaders to 1) identify new or promising strategies for making evidence-based decisions; 2) use or transform current data for making informed decisions; and when needed, 3) document how assessment and evaluation strengthen evidence gathering and decision-making.

References:

Paynter, R. A. (2009). Evidence-based research in the applied social sciences. Reference Services Review, 37(4), 435–450. doi:10.1108/00907320911007038

Satterfield, J., Spring, B., Brownson, R., Mullen, E., Newhouse, R., Walker, B., & Whitlock, E. (2009). Toward a transdisciplinary model of evidence-based practice. The Milbank Quarterly, 87(2), 368–390.

Except where noted, all content on this website is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

EvaluATE is supported by the National Science Foundation under grant number 2332143. Any opinions, findings, and conclusions or recommendations expressed on this site are those of the authors and do not necessarily reflect the views of the National Science Foundation.
